This post was inspired by a Sunday brunch with an intelligent young programmer from CERN. I was struck by his pessimistic, and arguably realistic, view on the future of software engineering. He argued strongly that AI has already made human coding obsolete. As the conversation unfolded, however, a more optimistic picture emerged. While traditional coding may be fading, a new niche is opening up. The real skill will lie in how we embed, configure, and optimise AI, a shift that demands entirely new talents and approaches. I supported this perspective with my own experience transforming Diplo’s software team over the past year, since advanced AI took over much of our coding work. What is your take?

You can now describe an app in plain language and get working code back in seconds. This practice is often called ‘vibe coding’, and it has become so widespread that it was named Collins Dictionary Word of the Year 2025. If tools like Claude Code or Cursor can produce code in seconds, it naturally raises questions about the future of software development.

For years, software developers were the ‘priests’ of the digital economy. The most valued skills were, among others, knowledge of syntax, mastery of object-oriented patterns, and line-by-line code review. Today, AI can do much of that faster, and often with fewer mistakes.

The bad news is that a large part of what developers were trained to do is becoming obsolete. The good news is that developers no longer need to spend so much time on the boring parts: writing boilerplate, hunting trivial bugs, and doing repetitive code reviews.

So what’s left? How do developers stay relevant and employable? Like many other professions, developers will be in a race with AI, finding niches where humans still add unique value. Here are a few areas where, at least for now, developers still have a clear raison d’être.

Bridging the gap between code and reality

Developers have long acted as translators, converting the messy reality of human needs into logical machine code. Now the role is reversing: developers increasingly need to adapt AI-generated code to the still-messy human world. This requires less pure logic and far more emotional intelligence. Code is neat. Society isn’t.

That is why the AI era will reward developers who can listen carefully, ask better questions, and understand what users actually mean, not just what they say. The hardest problems will often sit outside the code: conflicting expectations, fear of change, unclear goals, organisational politics, and the gap between what people want and what systems can safely do.

This transition won’t be easy. Many developers were trained inside a technical bubble, almost like a priesthood. Stepping more fully into the human world of ambiguity and trade-offs can feel uncomfortable at first.

That is also why hiring signals may shift. Instead of focusing only on credentials and tool mastery, employers may increasingly value evidence of empathy and imagination. Artistic interests (writing, music, painting) can be strong indicators of the ability to understand other people. Knowing JavaScript will still help, but AI is increasingly better at it. What AI struggles with is the human side of building technology. In my own recruitment activities, I increasingly look for philosophers, artists, and theologians whose training embraces the complexity of the human condition.

Coding: from mantra to irrelevance

In the early 2010s, coding reached peak cultural status. Hundreds of hackathons and initiatives such as “coding for girls” and “coding for youth” flourished. Even today, institutions like EPFL run girls’ coding clubs.

But as pure coding becomes increasingly automated, the next wave of education may shift focus. Future initiatives might emphasise critical thinking, philosophy, and literature—disciplines that will remain essential for as long as humanity exists, especially in our evolving race with AI.

AI creates a paradox of plenty: if we can build almost anything, what should we build? That abundance triggers an unsettling kind of choice overload. Ultimately, someone still has to decide—set priorities, accept trade-offs, and say no to most possibilities.

This is where engineers can help strike a balance between user expectations, technical options, and available resources. In big organisations, the challenge becomes even sharper because individual needs collide with institutional dynamics. Priorities conflict, goals change, and “requirements” often contain hidden assumptions or policy compromises.

As AI development becomes easier, the scarce skill becomes judgment: shaping the right problem, defining success, deciding what not to automate, and spotting where technology creates new risks. The developer who can guide that conversation becomes far more valuable than the developer who only executes coding tasks.

AI doesn’t just generate code. It also makes systems more interconnected. Modern applications are less like single products and more like networks of services: APIs, agents, connectors, and modules spread across vendors, servers, and internal platforms. That interdependence offers convenience, but it also brings fragility.

When many pieces rely on each other, vulnerabilities multiply, failures become harder to diagnose, and accountability becomes blurry. In this environment, developers can remain essential as orchestrators of complexity. Their job is to know what should connect to what, how to preserve data integrity and privacy, and how to design systems that degrade gracefully when something breaks.

They become the people who can keep a system understandable, observable, and resilient—especially when a model changes behaviour, a vendor updates an API, or a small failure cascades into a larger incident.
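To make "degrade gracefully" a little more concrete, here is a minimal Python sketch of one common pattern: try the primary service, and if it fails, record the error and fall back to something simpler. The names `with_fallback`, `live_summary`, and `cached_summary` are hypothetical and exist only for illustration; a real system would likely add timeouts, retries, and proper monitoring.

```python
from typing import Callable, TypeVar

T = TypeVar("T")


def with_fallback(
    primary: Callable[[], T],
    fallback: Callable[[], T],
    on_error: Callable[[Exception], None] = lambda exc: None,
) -> T:
    """Return primary() if it succeeds; otherwise report the error and return fallback()."""
    try:
        return primary()
    except Exception as exc:  # broad on purpose: any failure triggers the fallback
        on_error(exc)         # keep the failure observable instead of swallowing it
        return fallback()


# Hypothetical stand-ins for a remote AI service and a local cache.
def live_summary() -> str:
    raise TimeoutError("model endpoint did not respond")


def cached_summary() -> str:
    return "Summary from yesterday's cache"


if __name__ == "__main__":
    seen_errors: list[Exception] = []
    result = with_fallback(live_summary, cached_summary, seen_errors.append)
    print(result)
    print(f"{len(seen_errors)} failure(s) recorded")
```

The design choice worth noticing is the `on_error` hook: the user still gets an answer, but the failure is recorded rather than hidden, which is what keeps the system observable.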

Some roles remain human-centric, for now.

Debugging is one example. AI-generated code isn’t perfect, and ultimate human oversight still matters, though this window may narrow over the next 1–3 years.

Security audits are another. The initial AI gold rush prioritised capability over security, and risks such as AI-generated SQL injections are only now emerging. While the industry is shifting focus, human-led security review may remain critical for the next few years.
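For readers who have not seen one, here is a small Python sketch of the kind of SQL injection a reviewer would look for, using the standard-library `sqlite3` module and an in-memory database. The table, the function names, and the payload are invented for this illustration. The first lookup builds the query by pasting user input into the SQL string; the second passes the input as a bound parameter.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """Create a throwaway in-memory database with two demo users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "admin"), ("bob", "viewer")],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Vulnerable: the input becomes part of the SQL text itself.
    query = f"SELECT name, role FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Safer: the input is bound as a value and is never parsed as SQL.
    return conn.execute(
        "SELECT name, role FROM users WHERE name = ?", (name,)
    ).fetchall()


if __name__ == "__main__":
    conn = make_demo_db()
    payload = "x' OR '1'='1"  # classic injection string
    print("unsafe:", find_user_unsafe(conn, payload))
    print("safe:  ", find_user_safe(conn, payload))
```

With the injection string, the unsafe version returns every row in the table, while the parameterised version returns nothing, because no user is literally named `x' OR '1'='1`.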

Many software developers will struggle. A smaller group will do exceptionally well, especially those who build strong non-coding skills: communication, empathy, product thinking, systems thinking, and judgment under uncertainty.

If you want one simple habit that helps with this transition, read fiction and poetry regularly. Fiction trains perspective-taking and an understanding of human motives—exactly what technical education often neglects.

And if you are choosing a career, consider philosophy, literature, history, theology, psychology—any discipline that teaches you how humans think, cooperate, fight, dream, and fail. Those skills will remain relevant as long as humanity exists, especially in a world where AI can write code but still cannot fully replace human logical, emotional, and intuitive cognition. Even if AI can create our ‘replicas’, which is not very likely, humans should and must remain ‘in charge’.

Technology, content, and human interaction have shaped DiploAI’s work in delivering courses, conducting research, and developing apps and tools. Over the past three years, we have seen a major shift: in 2023, our focus was largely technological, installing and experimenting with LLMs and AI applications. Today, AI technology is increasingly commoditised. There are thousands of LLMs with only marginal differences in performance. What makes a real difference is the customisation of AI for specific needs and contexts. The technical side of customisation, such as building RAG systems and agents, has been largely automated, reducing the need for extensive coding by programmers.

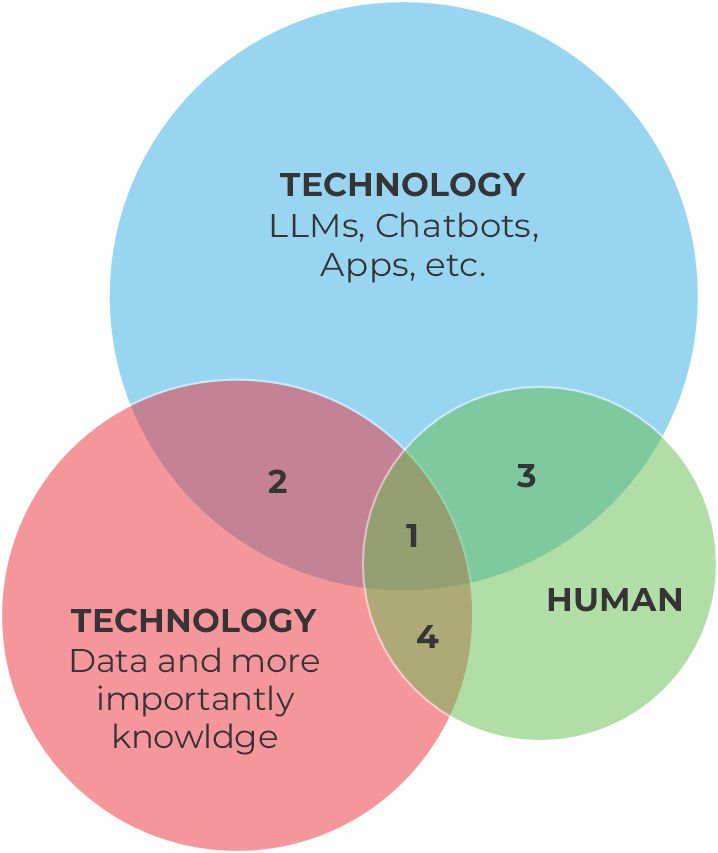

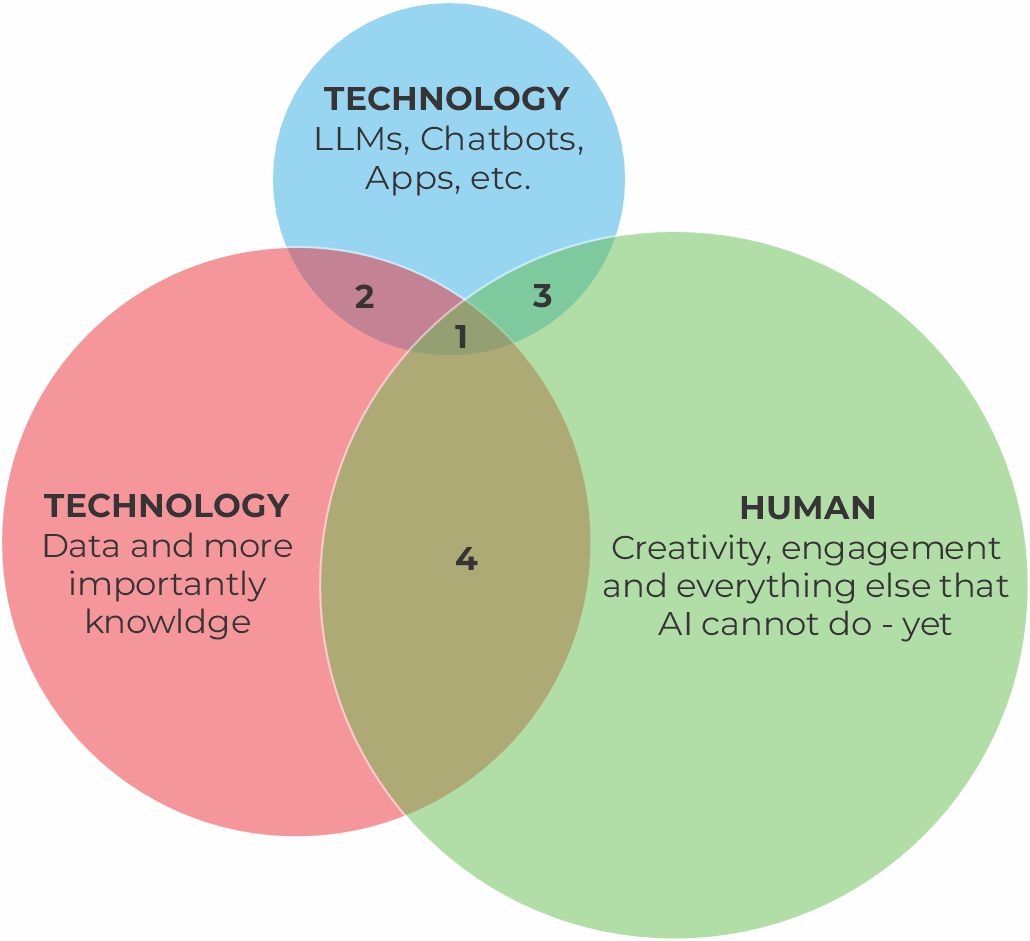

Overall, to paraphrase an old saying, technology remains necessary, but it is no longer the decisive factor for successful AI, as illustrated below.

Timeline: 2023 (technology-led) → 2026 (content- and human-led)

The determinant of overall AI success has shifted from technology to content (in particular, knowledge) and human interaction. The optimal AI transformation (the “core” area, or 1) is increasingly shaped by critical interplays (2–4) at the core of Diplo’s cognitive proximity approach: how to nurture productive and creative working relationships between humans, and between humans and machines.

1. Core (Technology × Content × Human interaction)

The right orchestration among these three aspects requires constant and agile adaptation: continuously recalibrating what should be handled by tools, what depends on knowledge resources, and what needs human input. Over the last few years, we have found that frequent organisational adaptation runs against two human needs: hierarchy and predictability.

2026 priorities:

2. Technology × Content

The key area here is data and knowledge preparation, especially data labelling through hypertext annotation and evaluating AI inference against an expert-defined ‘ground truth’ benchmark (e.g., what is the correct answer to a specific question).
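As a rough illustration of evaluating against a ‘ground truth’, here is a minimal Python sketch. It is not Diplo’s actual evaluation pipeline. It assumes the benchmark is a simple mapping from question to expert-approved answer, and it uses normalised exact match as the scoring rule, which is a deliberate simplification; real evaluations often need expert judgement or softer matching. All names (`evaluate`, `normalise`, `toy_model`) are hypothetical.

```python
from typing import Callable


def normalise(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(text.lower().split())


def evaluate(
    answer_fn: Callable[[str], str],
    benchmark: dict[str, str],
) -> tuple[float, list[tuple[str, str, str]]]:
    """Score answer_fn against expert answers.

    Returns (accuracy, failures), where each failure is
    (question, expected answer, actual answer).
    """
    if not benchmark:
        raise ValueError("benchmark must contain at least one question")
    failures = []
    for question, expected in benchmark.items():
        actual = answer_fn(question)
        if normalise(actual) != normalise(expected):
            failures.append((question, expected, actual))
    accuracy = 1 - len(failures) / len(benchmark)
    return accuracy, failures


# Hypothetical stand-in for a call to an AI system.
def toy_model(question: str) -> str:
    canned = {"What is the capital of Switzerland?": "  bern "}
    return canned.get(question, "I am not sure")


if __name__ == "__main__":
    ground_truth = {
        "What is the capital of Switzerland?": "Bern",
        "In which city is CERN headquartered?": "Geneva",
    }
    accuracy, failures = evaluate(toy_model, ground_truth)
    print(f"accuracy: {accuracy:.0%}")
    for question, expected, actual in failures:
        print(f"MISS: {question!r} expected {expected!r}, got {actual!r}")
```

Returning the list of failures alongside the score matters more than the score itself: it gives experts the concrete cases they need to review, which is where the human side of the benchmark comes in.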

2026 priorities:

3. Technology × Human

After realising the relevance of the human-technology interplay, we started experimenting with different pedagogies to help humans grasp the probabilistic yet engineered nature of AI. After intensive research and testing, we designed an AI Apprenticeship pedagogy, which has been run four times over the last 14 months, resulting in more than 100 successful apprentices. Apprentices, including Diplo staff, have gained a deeper understanding of the full AI pipeline, from document selection and model behaviour to inference, prompting, and evaluation.

2026 priorities:

4. Content × Human

Human expertise remains indispensable. Experts can use AI as a desk researcher and assistant, but they are—and should remain—the primary source of substantive content. Knowledge is not simply ‘distilled’ from data; it emerges through conversation, interpretation, and negotiation between humans (and, increasingly, humans and AI) in specific contexts—consistent with our cognitive proximity approach.

2026 priorities:

In 2026, DiploAI’s priority will be to further develop our ‘cognitive proximity’ among humans and between humans and machines to ensure human-centric AI. We will build on our comparative advantages: