According to the United Nations, an indigenous language disappears roughly every two weeks. UNESCO estimates that nearly half of the world’s 7,000 languages could vanish by the end of this century. When a language dies, more than vocabulary disappears: entire systems of ecological knowledge, oral law, medicinal practice, and collective memory are lost.

Language loss is often framed as a cultural tragedy, but it is also a political and diplomatic issue. Language determines who can participate in public debate, education, digital services, and international forums. Communities that cannot use their language in modern systems gradually lose influence.

Artificial intelligence is now entering this space. It will not reverse language shift on its own; families, schools, and community institutions remain central. But AI tools are beginning to support documentation, translation, transcription, and learning at a scale that was previously impossible. Several recent initiatives show both the promise and the limits of this approach.

Google’s Woolaroo is a mobile application that lets users point their phone camera at an object and hear its name spoken in an endangered language. It supports more than 30 languages, including Ainu from Japan, Yiddish, and several indigenous languages of the Americas. The technology combines image recognition with community-recorded audio. Native speakers provide pronunciation and vocabulary. The app then connects visual identification with spoken language in real time.
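The step that links a recognised object to a spoken word can be illustrated with a minimal Python sketch. It assumes, hypothetically, that an image classifier returns English labels with confidence scores and that community recordings are stored in a dictionary keyed by those labels. `Recording`, `lookup_word`, the `min_confidence` threshold, and the file paths are illustrative names, not Woolaroo’s actual code or API. The design point the sketch shows is that such an app can only speak words that speakers have already recorded, so the community’s vocabulary sets the limit of what is taught.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Recording:
    word: str        # the word in the endangered language (hypothetical field)
    audio_path: str  # path to a community-recorded pronunciation (hypothetical)


def lookup_word(
    predictions: list[tuple[str, float]],
    vocabulary: dict[str, Recording],
    min_confidence: float = 0.5,
) -> Optional[Recording]:
    """Return the recording for the most confident recognised object that the
    community vocabulary covers, or None if nothing qualifies."""
    # Check the classifier's guesses from most to least confident.
    for label, confidence in sorted(predictions, key=lambda p: p[1], reverse=True):
        if confidence < min_confidence:
            break  # every remaining prediction is even less confident
        if label in vocabulary:
            return vocabulary[label]
    return None


# Hypothetical usage: placeholder word and file path.
vocabulary = {"dog": Recording(word="<word for dog>", audio_path="recordings/dog.wav")}
match = lookup_word([("cat", 0.92), ("dog", 0.71)], vocabulary)
print(match.audio_path if match else "no recording available")  # prints recordings/dog.wav
```

Here the classifier is more confident about “cat”, but because no community recording exists for it, the sketch falls back to the next recognised object that speakers have recorded.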

The effect is practical. In Louisiana, Creole speakers use the app to teach children everyday words that are no longer in common use. Instead of relying on printed dictionaries or classroom-only instruction, families can integrate language learning into daily routines. Elders contribute recordings, preserving authentic pronunciation and reinforcing their role as knowledge holders.

Woolaroo does not attempt to solve everything. It does not generate new grammar systems or replace teachers. Its value lies in accessibility. It lowers the barrier to engagement. For small states and minority communities, such tools can support cultural documentation that strengthens claims in international discussions about heritage protection and linguistic rights.

Meta’s No Language Left Behind initiative focuses on large-scale machine translation for low-resource languages. The project aims to support hundreds of languages that historically lacked sufficient digital data for AI training.

Languages such as Luganda in Uganda and Quechua in the Andes are now supported by automated translation systems used across platforms such as Facebook and WhatsApp. These tools enable written communication across regions without defaulting to English, Spanish, or other dominant languages.

The diplomatic relevance is clear. Digital platforms increasingly function as public squares. If a language cannot operate in these spaces, its speakers are structurally disadvantaged. Real-time translation allows communities to publish, organise, and advocate in their own language while still reaching broader audiences.

However, scale also introduces risk. Automated systems can produce errors, especially when training data is limited. In December 2024, controversy arose after an AI-generated book contained incorrect translations into Mi’kmaq and Mohawk. The mistake highlighted how unchecked automated outputs can misrepresent communities.

This incident underlines an important point: inclusion must be paired with accountability.

In New Zealand, Te Hiku Media developed a speech recognition system for Te Reo Māori that reportedly achieves over 90% transcription accuracy. The system converts spoken Māori into text, supporting news production, educational materials, and digital content creation.
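Speech recognition accuracy is commonly reported through word error rate (WER): the minimum number of word substitutions, deletions, and insertions needed to turn a system’s output into a reference transcript, divided by the number of words in the reference. A figure such as “90% accuracy” is often shorthand for a WER of roughly 10%, but the figures above do not say which metric was used, so that reading is an assumption. The sketch below computes WER with a standard dynamic-programming edit distance; the function name and example phrase are illustrative.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance between two transcripts, divided by the
    number of words in the reference transcript."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] = edits needed to turn the previous reference prefix into
    # the first j hypothesis words (one row of the DP table at a time).
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from output
                curr[j - 1] + 1,     # insertion: extra word in output
                prev[j - 1] + cost,  # substitution, or a match when cost is 0
            )
        prev = curr
    return prev[len(hyp)] / len(ref)


# One word of a four-word reference is missing from the output.
wer = word_error_rate("kia ora koutou katoa", "kia ora koutou")
print(wer)  # prints 0.25
```

Because insertions also count as errors, WER can exceed 1.0 for very poor output, which is one reason an “accuracy” figure is only meaningful alongside the metric behind it.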

What makes this case particularly important is governance. The project was designed and led by Māori technologists. Language data is treated as a ‘taonga’, a cultural treasure. Data storage and access are governed by Māori principles rather than by external corporate policies.

This model addresses a central tension in AI development: who owns the data? In many AI projects, language data is extracted, processed, and commercialised without meaningful community control. Te Hiku Media’s Kaitiakitanga License shows a different path, one in which technological development reinforces cultural sovereignty rather than undermining it.

Schools using Māori-language digital tools have reported increased engagement and stronger fluency outcomes. Technology here supports capacity development and revitalisation efforts already underway, rather than replacing them.

In 2025, researchers at the University of Hawaiʻi introduced FormosanBench, the first evaluation benchmark designed specifically to test large language models on Formosan languages such as Atayal, Amis, and Paiwan. These indigenous languages of Taiwan are under significant pressure.

Benchmarks matter. Many AI systems are evaluated primarily on English and other major languages. Without proper testing, performance in smaller languages remains unknown or assumed. FormosanBench creates a structured way to measure how well models handle grammar, vocabulary, and translation tasks in these languages.
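The core mechanics of a benchmark can be sketched as scoring a model’s outputs against reference answers and reporting results separately for each language and task, so that weak performance in one language is not hidden inside an overall average. The Python sketch below assumes a hypothetical item format (`Item`) and uses exact-match accuracy for simplicity. It does not reproduce FormosanBench’s actual data format or metrics; real translation benchmarks usually rely on overlap measures such as BLEU or chrF rather than exact match.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class Item:
    language: str   # e.g. "Atayal" (hypothetical item format)
    task: str       # e.g. "translation"
    prompt: str
    reference: str  # expected answer


def evaluate(
    model: Callable[[str], str], items: Iterable[Item]
) -> dict[tuple[str, str], float]:
    """Exact-match accuracy for each (language, task) pair."""
    correct: dict[tuple[str, str], int] = defaultdict(int)
    total: dict[tuple[str, str], int] = defaultdict(int)
    for item in items:
        key = (item.language, item.task)
        total[key] += 1
        if model(item.prompt).strip() == item.reference.strip():
            correct[key] += 1
    return {key: correct[key] / total[key] for key in total}


# Toy usage with placeholder prompts and a lookup-table "model".
items = [
    Item("Atayal", "translation", "prompt-1", "reference-1"),
    Item("Atayal", "translation", "prompt-2", "reference-2"),
    Item("Amis", "translation", "prompt-3", "reference-3"),
]
canned = {"prompt-1": "reference-1", "prompt-2": "wrong", "prompt-3": "reference-3"}
print(evaluate(lambda prompt: canned[prompt], items))
# {('Atayal', 'translation'): 0.5, ('Amis', 'translation'): 1.0}
```

Pooled across all items, this toy model would score about 67%, a number that conceals its weaker result in one language; reporting per language is what makes such gaps visible.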

Early findings suggest that even advanced models struggle with linguistic features that differ significantly from dominant global languages. This exposes a structural bias in AI development: what is not measured is often neglected.

For policymakers, this example carries weight. Supporting endangered languages in the AI era requires not only data collection but also the development of evaluation standards. Without benchmarks, claims of inclusion remain superficial.

Not all endangered languages are spoken by national minorities or indigenous groups. Some reflect hidden histories within larger societies.

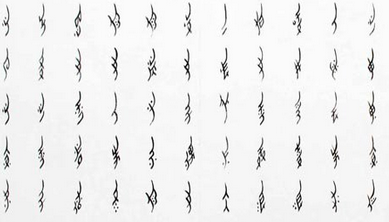

Nüshu is a 400-year-old writing system developed by women in rural Hunan Province in China. Unlike standard Chinese characters, Nüshu is a phonetic script that women used to share poetry, letters, and personal reflections in a social environment where formal education was largely inaccessible to them. It functioned as a parallel written culture, preserving experiences that were rarely recorded elsewhere. Today, only a handful of people can read it fluently.

Recent efforts led by researcher Ivory Yang from Dartmouth College have applied AI tools to digitise Nüshu manuscripts, develop optical character recognition for its unique script, and build searchable databases that preserve both the characters and their contextual meaning. This work does not aim to commercialise the script or integrate it into mass platforms.

Across these examples, one pattern stands out. AI works best when it supports existing community efforts and when governance structures protect local control. Technology alone does not create language revival. Economic pressures, migration, and education systems continue to shape language use. AI can assist with documentation, transcription, and translation. It cannot replace intergenerational transmission.

There are also technical challenges. Sparse datasets lead to inconsistent performance. Dialect variation complicates training. Automated systems may standardise language in ways that marginalise certain speech forms.

If AI is to contribute positively, human agency must remain central. This aligns with broader discussions about human-centred AI. The objective is not to automate culture, but to strengthen communities’ capacity to sustain it.

For diplomats and policymakers, endangered language technology should not be treated as a symbolic gesture or cultural add-on. It intersects with digital infrastructure, education policy, and international governance.

Concrete steps could include:

Small states and indigenous nations can leverage these tools to increase participation in global negotiations. When language barriers are reduced, representation improves.

AI will not rescue every endangered language. Many face social and economic pressures that technology alone cannot reverse. But digital exclusion accelerates decline. If a language cannot function in search engines, messaging platforms, speech systems, and educational tools, its space shrinks further.

For this reason, language technology should be treated as public infrastructure: not as a symbolic cultural initiative, but as a strategic investment in participation. Governments, research institutions, and technology companies are already shaping the linguistic future through the systems they build and fund. The outcome will depend less on technical capability and more on political will. Decisions made today about data ownership, evaluation standards, and inclusion will determine which languages remain visible in the digital sphere, and which fade from it.

Author: Slobodan Kovrlija