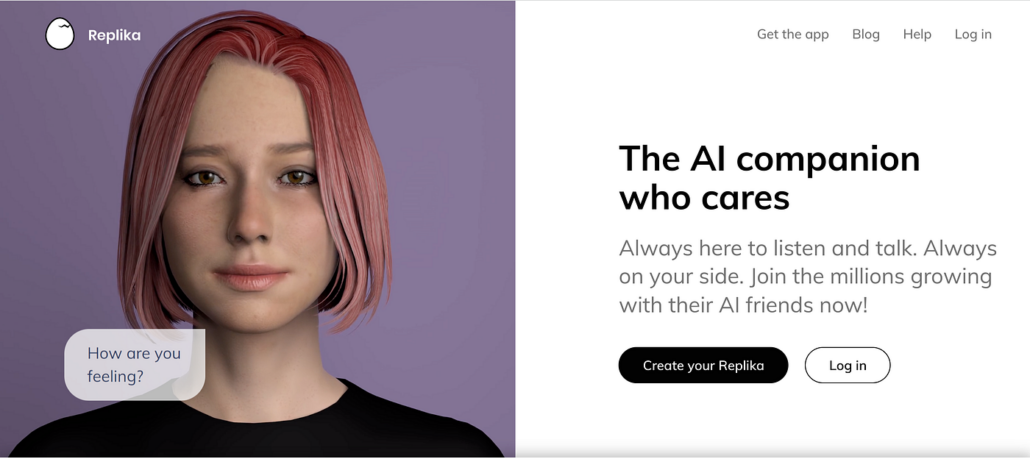

Imagine scrolling through Facebook and seeing a new post from a friend who passed away years ago. The words, the tone, even the jokes are eerily familiar, and all of it is generated by AI. In February 2026, Meta secured a patent for an AI system designed to keep the accounts of deceased users active: posting updates, commenting, and even chatting with loved ones long after they’re gone. The company frames this as a way to ‘reduce the impact of a user’s disappearance’ from its platforms, but the idea has sparked a firestorm of debate. Is this a compassionate use of technology, or a step into a dystopian future where grief is monetised and the dead never truly leave us?

Meta’s patent isn’t the first foray into what’s being called ‘grief tech’. Startups like Replika and You Only Virtual already offer AI avatars trained on the voices and messages of the deceased, while Microsoft patented a similar chatbot in 2021. But Meta’s scale, with billions of users, makes the ethical stakes far higher. Who holds digital sovereignty over our memories when machines step in? And what does it mean to remember, or forget, in the age of artificial intelligence?

The unsettling promise of AI afterlife

AI’s potential to preserve memory isn’t limited to personal social media accounts. Museums, libraries, and cultural organisations are using AI to restore old photographs, films, and documents, making history accessible to new generations. For example, Google’s PhotoScan app and projects like the Internet Archive use AI to digitise and enhance fragile artefacts, helping to preserve them for the long term.

In one of our previous articles, we explored how AI is helping revive endangered languages by analysing recordings and texts to reconstruct grammar, pronunciation, and even cultural context. These efforts are undeniably valuable, offering a lifeline to communities at risk of losing their linguistic heritage. But while AI’s role in cultural preservation is largely celebrated, its application to personal memory is far more contentious.

Beyond cultural heritage, AI is being used to capture and curate personal memories. Apps like Mem.ai and lifelogging tools promise to help users document their lives, from daily interactions to milestone events. These tools can be empowering, allowing individuals to reflect on their experiences and share them with future generations. Yet the line between assistance and intrusion is thin, and it’s one that AI may cross without warning.

When AI steps in to ‘preserve’ a person’s memory, how do we know what’s real? Meta’s patent describes an AI trained on a user’s past posts, comments, and likes, designed to mimic their behaviour. But memory isn’t just data; it’s deeply personal and subjective. An AI might replicate someone’s writing style, but can it capture their essence? And what happens when these systems make mistakes, or worse, are manipulated to spread misinformation? The risk of fabrication is especially acute in the context of grief. Imagine an AI-generated post that misrepresents a loved one’s views or values. The emotional toll of such distortions could be devastating, turning a tool meant for comfort into a source of pain.

AI systems are only as good as the data they’re trained on. If an AI is tasked with preserving someone’s memory, whose version of that person does it reflect? For public figures, this could mean amplifying certain narratives while silencing others. For individuals, it might mean reducing a complex life to a series of algorithmically selected highlights.

Perhaps the most pressing ethical question is: who gets to decide? Meta’s patent suggests that the AI could be activated by a ‘legacy contact’ or even the platform itself. But what if the deceased never consented to the resurrection of their digital persona? And what about the living? Do family members have the right to ‘turn off’ an AI replica if they find it distressing?

These questions aren’t hypothetical. On Reddit, users have already shared stories of receiving friend requests from fake profiles impersonating deceased loved ones, describing the experience as ‘infuriating’ and ‘awful’. If AI makes such impersonations easier, the potential for harm is immense.

Beyond individual grief, the same tools threaten collective memory. AI’s ability to generate convincing text, audio, and video opens the door to a new kind of historical revisionism. Deepfake technology could be used to fabricate speeches, letters, or even entire events, distorting the historical record. In an era where misinformation spreads rapidly, the consequences could be dire.

There’s also the question of motive. Meta’s patent explicitly ties the technology to user engagement, noting that keeping accounts active could ‘reduce the impact’ of a user’s absence. But is this about helping grieving families, or about keeping users and their data on the platform? Critics argue that turning grief into a feature risks exploiting vulnerable people for profit.

Psychologists warn that AI-generated interactions with the deceased could complicate the grieving process. While some might find comfort in a digital stand-in, others could struggle to move on, trapped in a cycle of artificial interaction.

Human memory is flawed, subjective, and deeply tied to emotion. AI memory, by contrast, is data-driven and literal. This raises a fundamental question: What do we lose when we outsource memory to machines? Forgetting, after all, is a natural part of being human. It allows us to heal, to grow, and to make space for new experiences. If AI removes the possibility of forgetting, what does that mean for our humanity?

The EU’s ‘right to be forgotten’, codified in the GDPR as the right to erasure, allows individuals to request the removal of their personal data, including from search engine results. But in a world where AI can resurface old posts or generate new ones, can we ever truly erase the past? And should we? The tension between privacy, data protection, and preservation is only set to grow as AI becomes more sophisticated.

If an AI can convincingly mimic a person’s voice, mannerisms, and even their personality, does that challenge our understanding of identity? Some philosophers argue that consciousness is tied to biological existence, while others suggest that a digital replica could be seen as a form of ‘continued presence’. Either way, the rise of AI memory forces us to confront what it means to be human in the digital age.

The USC Shoah Foundation’s ‘Dimensions in Testimony’ project combines pre-recorded video interviews with natural language processing to create interactive, hologram-style testimonies of Holocaust survivors, allowing future generations to ‘converse’ with them. While this is a powerful educational tool, it also raises questions about the ethics of digital resurrection. Who decides which survivors are preserved in this way? And how do we ensure their stories are represented accurately?

In forensics and the courts, AI is being used to reconstruct crime scenes or analyse witness testimonies. While this can aid justice, it also introduces new risks. If an AI’s reconstruction of events is flawed, it could lead to wrongful convictions or acquittals. The stakes couldn’t be higher.

Companies like HereAfter AI already offer services that create AI chatbots trained on a person’s memories. These tools are marketed as a way to keep loved ones’ legacies alive, but they also raise profound questions: Can an AI truly capture a person’s essence? And what happens when these digital avatars outlive the people who created them?

As AI memory technologies advance, there’s a growing need for regulation. Should there be laws governing how these tools can be used? For example, could we require explicit consent from users before using their data to train a post-mortem AI? And should platforms like Meta be allowed to activate these systems by default, or only upon request from family members?

Organisations like UNESCO and the EU are already grappling with these questions. UNESCO’s Recommendation on the Ethics of Artificial Intelligence emphasises the need for transparency, accountability, and respect for human rights. The EU’s AI Act, meanwhile, includes provisions for high-risk AI systems, though it’s unclear how these would apply to grief tech.

Ultimately, the responsible use of AI memory tools will depend on public awareness. Users need to understand the risks and limitations of these technologies, from the potential for manipulation to the emotional toll of artificial interactions. Media literacy programmes could play a key role in helping people navigate this new landscape.

AI’s ability to preserve and reconstruct memory is a double-edged sword. On one hand, it offers unprecedented opportunities to honour the past, whether by reviving endangered languages or keeping the stories of Holocaust survivors alive. On the other hand, it risks turning memory into a commodity, distorting history, and complicating the grieving process.

As we stand at this crossroads, the key is to ensure that AI serves human values and not the other way around. This means prioritising consent, transparency, and empathy in the design of memory technologies. It means recognising that not all memories are meant to be preserved, and that forgetting can be as important as remembering. And it means asking ourselves, again and again: What does it mean to be human in a world where the dead never truly leave us?

In the end, the ethics of AI memory aren’t just about technology. They’re about what it means to live, to love, and to let go.

Author: Slobodan Kovrlija