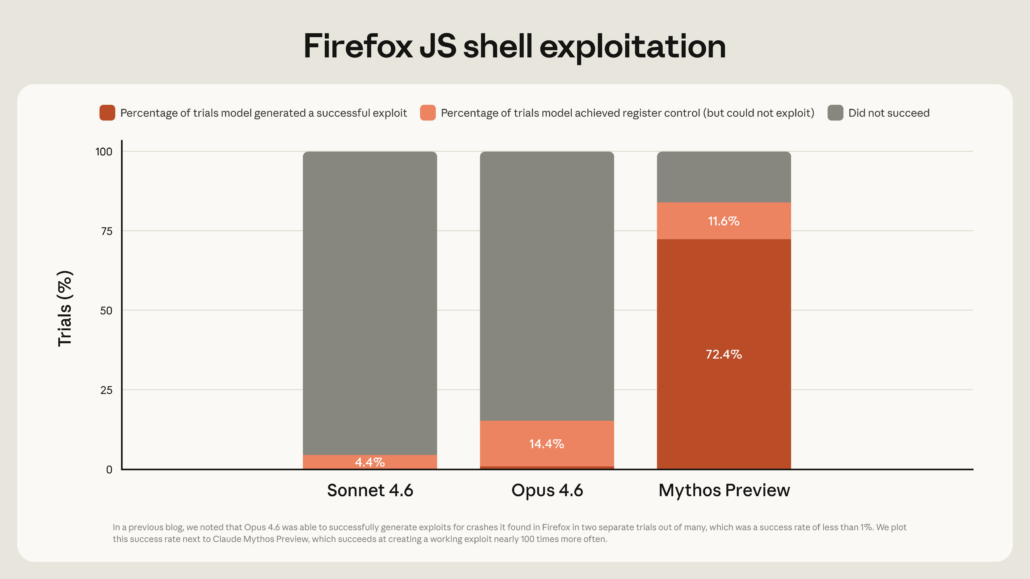

How one cyber‑offensive model should change AI governance

News about AI model developments and breakthroughs in capability and speed arrives almost daily, to the point that one hardly pays attention anymore. It is hard to pick out what matters from all the noise. But last week saw something that really stands out: Anthropic unveiled its Mythos model, described by the UK AI Safety Institute as among the most capable cyber‑offensive AI systems it has assessed to date in controlled evaluations.

The announcement is notable on its own, but it has also already triggered a scramble among regulators, financial institutions, and national security agencies. Worries about online safety, data security, and the resilience of critical infrastructure have risen sharply. At the same time, the legal and policy frameworks intended to govern such systems remain scattered, underdeveloped, and often disconnected from the technical reality they are meant to address. This is not just another overhyped AI story. Mythos is a signal that the rift between what AI can do and what regulators are prepared to handle is widening fast.

To understand why Mythos matters, it helps to move beyond the headlines. Mythos is not simply a general‑purpose chatbot upgraded with a few extra features. It is a so‑called frontier model whose capabilities are being tested and discussed in the context of cyber‑offensive use: that is, the use of AI to find and exploit vulnerabilities in software, networks, and systems. Assessments from the UK AI Safety Institute suggest that Mythos may be capable of autonomously scanning and probing digital systems in controlled settings at a scale and speed beyond human operators. It can test browsers, operating systems, and services for weaknesses and, in some cases, suggest or assist in executing attacks. This is not purely hypothetical; it is being treated as a realistic benchmark for how powerful AI-driven cyber tools could become.

What Mythos actually is

What sets Mythos apart from many previously discussed models is its open framing as a cyber‑offensive capability. Earlier concerns often focused on dual‑use systems that might be misused for surveillance, disinformation, or social‑media manipulation. Mythos brings the threat into the domain of infrastructure, security, and real‑world disruption. It signals that the frontier is no longer only about better text, better images, or even better customer-service chatbots, but about systems that could, in principle, be adapted to target the digital systems that underpin daily life.

The responses from governments and regulators already reveal two different reactions.

In the UK, the AI Safety Institute has issued public warnings and an open letter to businesses, emphasising that frontier AI capabilities are now advancing fast and that Mythos represents a substantial leap in cyber‑offensive capability compared to earlier models. The message is both technical and political: if one private company can build such a model, others will likely follow, and the public and private sectors must urgently prepare. The Bank of England’s governor has also called for rapid global assessments of how such tools could destabilise financial systems and critical infrastructure.

In the US, the reaction mixes shock, pragmatism, and a familiar political script. President Trump has publicly endorsed stronger AI safeguards, including a ‘kill switch’ to deactivate especially dangerous models. Treasury Secretary Scott Bessent has described Mythos as a step function change in capability, signalling that the US financial sector is already being told to treat this not as a future possibility, but as a present‑day risk. Large banks and tech firms are reportedly working with Anthropic under programs like Project Glasswing, which give selected partners access to find and patch vulnerabilities before they can be exploited.

What is striking in both cases is the rush to react rather than the presence of a coherent, long‑term governance framework. The UK leans toward public warning and assessment; the US leans toward control‑oriented, top‑down tools, such as the ‘kill switch’ idea, while still avoiding a broad, rules‑based approach to AI development. Neither approach yet feels like an answer proportional to the change Mythos represents.

Behind the headlines about capability and risk lies a more profound question: why was a model like Mythos built at all?

Anthropic’s stance appears to be that it has built Mythos, recognizes it is extremely dangerous, and has decided not to release it publicly. Instead, the company will control access, determine who can use it and under what conditions, and will use it to help partners find and fix vulnerabilities.

This framing also raises uncomfortable questions about power and legitimacy. Why is a private company effectively deciding:

This is clearly a question about Anthropic’s ethics. From a governance perspective, it is also a question of who gets to set the boundaries on what kinds of AI capabilities are permissible in the first place. Are there any realistic mechanisms to prohibit the creation of models like Mythos, or will the default answer always be: ‘If someone can build it, they will, and then others will have to cope’?

This is where the disconnect between AI regulation and technical reality becomes especially visible. Existing laws and policy drafts often talk about transparency, bias, explainability, and social‑media manipulation. They rarely ask whether certain kinds of AI systems, in particular offensive, infrastructure‑targeting models, should simply not be built at all, or should be subject to strict licensing and oversight. Mythos forces that question to the surface.

So far, the Mythos story is being told in two narrow frames: as a national security issue for militaries and spy agencies, and as a corporate‑risk issue for banks and big tech companies. But the real story is broader. If a model like Mythos can autonomously probe and exploit digital systems, then the target list is not just classified servers or trading algorithms. It includes the everyday infrastructure that keeps modern societies running.

Electricity grids, water systems, public transport networks, EV‑charging fleets, airports, and urban data centres all depend on connected software, sensors, and control systems. These systems are built on code, protocols, and networks that can be probed and mapped, and in some cases may present exploitable weaknesses. Tools that can rapidly find vulnerabilities at scale tilt the balance toward attackers: defenders must protect every weak point, while an AI‑assisted attacker only needs to find one.

This is not the same as saying that power grids will be taken down overnight or that every EV charger will be hacked. It is saying that the distribution and maintenance of risk is changing. The entities that used to control access to advanced cyber tools (intelligence agencies, specialized security firms, or criminal groups with long learning curves) are now joined by private AI labs that can centralize and generalize offensive capability in software. From a governance angle, this is not only a technical problem but also a systemic one.

This discussion is a direct continuation of the theme we explored in an earlier Diplo article, ‘The Gap between AI Regulation and AI Reality’. Then, the focus was on how fast AI capabilities evolve while regulatory frameworks lag behind, often clinging to outdated ideas of trustworthy design and ethical use. Mythos shows that the gap is not only about fairness or transparency; it is also about offensive cyber capability embedded in privately controlled frontier models and about who gets to decide what level of risk is acceptable for the rest of us.

Mythos does not call for panic, but it does call for a more serious and explicit conversation about the kinds of AI systems that should and should not exist. It is not enough to add AI-safety clauses to financial‑stability statutes or to demand kill switches for extreme cases.

Policymakers and international institutions need to think more concretely about:

From a multi‑stakeholder regulatory standpoint, this is the moment where the distance between AI regulation and AI reality should be narrowed urgently. It is also the moment where institutions, engineering communities, and policy‑oriented technologists can help frame these governance questions beyond purely technical or national security perspectives.

Mythos is not just another model. It is a symptom of a bigger change: the large-scale automation of offensive cyber capabilities, held in private hands, emerging long before societies have agreed on what such tools should be allowed to do. It forces us to confront the uncomfortable truth that the actors with the most advanced knowledge of AI’s risks are also the ones least accountable to the public.

If the world does not take heed of what Mythos represents, it will have to live with the consequences of a race that we cannot afford to lose. For engineers, policymakers, and citizens alike, this is not a moment to retreat into technical mysticism or regulatory wishful thinking. It is a moment to insist that AI governance must be as concrete, explicit, and serious as the capabilities it is trying to manage.

Author: Slobodan Kovrlija