Consider what we love about sport. Not just the victory, but the almost-victory. The missed penalty that defined a career. The marathon runner who collapses metres from the finish line and gets back up. The underdog team that loses gloriously. We don’t only tolerate failure in sport. We need it. Without the real, ever-present possibility of failing, the win means nothing. We understand this intuitively when we watch a game. What we seem less willing to acknowledge is that the same logic applies to most of what makes us human.

We celebrate excellence in sport precisely because it is built on human imperfection. Even a technically perfect robotic athlete could not replicate the shared histories, relatable failures, and hard-won mastery that make human athletic achievement meaningful. We already know, in other words, that removing failure removes meaning.

So if we understand in sport that failure is essential, why are we so comfortable removing it from other parts of life through AI? As generative AI tools become woven into education, professional life, and creative practice, they are doing something no previous technology quite managed: they are making failure genuinely optional. And that should give us pause.

For decades, psychologist Carol Dweck’s research on mindset has illuminated something that most of us sense but rarely articulate clearly: failure is not the opposite of growth, it is its engine. In her framework, people who believe their abilities can be developed (what she calls a growth mindset) don’t just recover better from failure; they actively seek out the kind of difficulty that makes failure possible. They understand, even if not consciously, that there is no other way to get better.

This insight goes deeper than motivation. Neuroscience has shown that the actions taken in the face of difficulty (trying new strategies, asking for help, persisting through uncertainty) physically strengthen neural connections. The brain, quite literally, is built by the experience of struggling. Comfortable, frictionless success leaves fewer marks. It is the encounter with difficulty, with the genuine risk of getting something wrong, that shapes us.

Beyond cognitive development, failure is also how we come to know ourselves. Who we are under pressure, how we respond to disappointment, what we are willing to try again after being knocked back: these are not abstract qualities. They are forged through experience. A person who has never genuinely failed at something meaningful is missing a large and important part of their self-knowledge. They have never had to find out what they are made of.

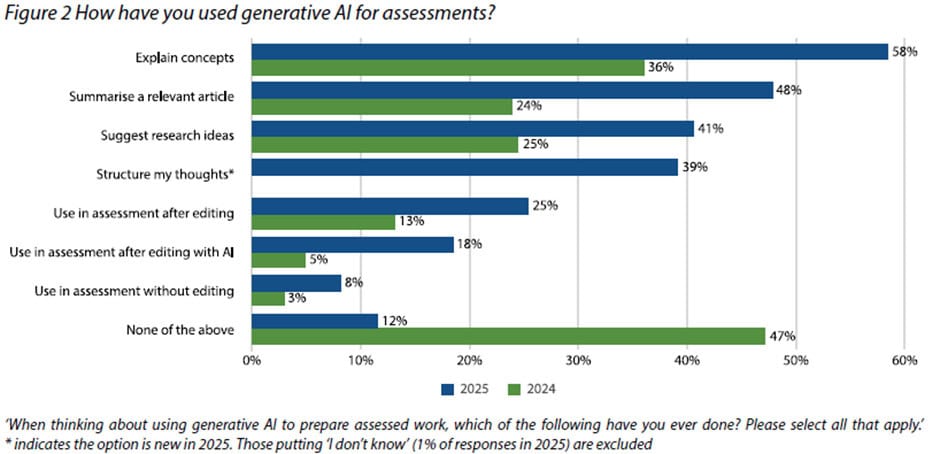

The data emerging from education is striking. A 2025 experimental study on the use of ChatGPT in academic writing found significantly lower cognitive engagement among AI‑assisted students, suggesting that AI fluency encourages ‘cognitive offloading’ and a form of cognitive complacency. According to the UK’s Higher Education Policy Institute, some 92% of students were actively using AI by 2025, with 88% having used it to complete assignments.

Stanford professor Mehran Sahami put it starkly:

‘AI has broken a core assumption of education. Strong outputs no longer indicate strong learning.’

Researchers at the Brookings Institution have gone further, finding that heavy use of AI weakens what they call ‘learning mindsets’. Students develop unrealistic expectations about ease, lose opportunities to build resilience and grit, and become less willing to engage in the productive struggles that lead to authentic learning. In short, they are being robbed of the very experiences that would build them.

This is not unique to education. A professional who uses AI to draft every difficult email never has to find the words themselves. They never have to sit with the discomfort of not knowing how to say something hard and work through it. A designer who generates concepts instantly skips the frustrating blank-page hours that, research consistently shows, are where original thinking actually happens. A programmer who has AI debug their code may ship faster, but they build no intuition for why things break. The output arrives before the struggle that would have made the person more capable.

This is subtly different from other forms of assistance. A calculator didn’t prevent you from understanding mathematics. You still had to construct the problem, interpret the result, and know when something went wrong. A spell-checker didn’t write your sentences.

But generative AI can now take on the full cognitive task (the thinking, the structuring, the execution), leaving the human in the position of reviewer rather than creator. The struggle that would have built something in you simply doesn’t occur. This outsourcing of agency touches on the core of digital sovereignty: the ability of individuals to remain masters of their own cognitive processes.

There is something even more fundamental at stake than skill development. Success feels meaningful partly because failure was possible. This is not sentiment. It is structural. The value of an achievement is inseparable from the reality of the risk that you might not achieve it. A game where the outcome is predetermined isn’t a game. An exam you cannot fail isn’t a test of anything. An essay written entirely by AI and submitted under your name isn’t your essay in any meaningful sense.

We are beginning to see this play out in ways that are hard to name but easy to feel. Students report feeling oddly hollow about work they have produced with heavy AI assistance, even when the result was praised. Professionals describe a growing sense of disconnection from their output, a creeping uncertainty about what they actually know versus what they have simply asked a machine to tell them.

The philosopher Michael Sandel has long argued that markets corrupt goods by changing what they mean: buying your way out of something valuable changes its nature entirely. AI may be doing something similar to effort.

There is also the question of identity. We know ourselves through the accumulation of what we have done and how we responded when things went wrong. The first time you had to apologise genuinely, and didn’t know how. The project that failed, and what you learned from it. The skill you built slowly, and the frustration that accompanied it. These experiences are not incidental to who you are. They are constitutive of it. A life carefully optimised to avoid failure is also, in some important way, a life that has avoided becoming.

None of this is an argument against AI. Like every previous tool, it is neither the problem nor the solution. It is an amplifier of our choices. The question is whether we are making those choices consciously.

In education, this means rethinking what we are actually trying to do. If the goal of an essay assignment were merely to produce a well-structured argument, AI assistance would serve that goal perfectly well. But if the goal is to develop the student’s capacity to think, structure, and argue (which it should be), then handing that cognitive work to a machine defeats the entire purpose. Educators are not wrong to be alarmed, and institutions are not being reactionary when they insist on spaces where students must think without assistance. Those spaces are not obstacles to learning; they are the learning.

In professional life, this means cultivating what might be called intentional difficulty. Choosing, sometimes, to write the hard email yourself rather than delegating it to AI. To sit with a problem before reaching for the shortcut. To build the skill rather than buy the output. Not because efficiency doesn’t matter, but because capability and judgment are built through encounters with difficulty, and they cannot be purchased.

In creative life, this means remembering that the struggle is not separate from the work. It is part of the work. The blank page, the failed draft, the piece that doesn’t come together until the sixth attempt: these are not inefficiencies to be optimised away. They are where the maker encounters themselves, where something genuine is being forged. An AI can produce a polished result. It cannot produce the growth that comes from the effort of reaching for one.

Perhaps most urgently, this means thinking carefully about children. The first generation growing up with AI as a natural presence may also be the first to reach adulthood having avoided entire categories of useful failure: the frustration of learning something slowly, the embarrassment of a poor attempt, the satisfaction of getting something right after many times getting it wrong. We should be deliberate about what we are protecting them from and what we are protecting them for.

We return, in the end, to sport. We could, in principle, build AI systems that play every game with perfect strategy. We have not done so, or where we have, we watch the AI play for a while with curiosity and then stop watching. It is not actually interesting. What is interesting, what is meaningful, is the human version: imperfect, uncertain, capable of failing, and therefore capable of something we recognise as triumph.

This is what humAInism insists on: that the measure of good technology is not whether it removes all difficulty from human life, but whether it leaves room for the kind of difficulty that makes us more fully human. There is a version of an AI-assisted future that is comfortable, efficient, and oddly diminished: one where people produce impressive outputs they didn’t build, where credentials reflect skills that were never developed, where the hard and necessary work of becoming is streamlined out of existence.

And there is another version, in which AI handles the genuinely tedious (the reformatting, the summarising, the mechanical) while humans are freed to engage more deeply with the things that are actually hard: the thinking that requires struggle, the work that builds judgment, the attempts that might fail and therefore might genuinely succeed. That version requires us to be conscious about what we protect.

The game is worth playing because we might lose. The work is worth doing because we might not get it right the first time. Life is worth living because it is genuinely ours, shaped by what we tried, what we failed at, and what we learned in the process. These are not things we should be in a hurry to hand over.

Author: Slobodan Kovrlija